How Hugging Face achieved a 2x performance boost for Question Answering with DistilBERT in Node.js — The TensorFlow Blog

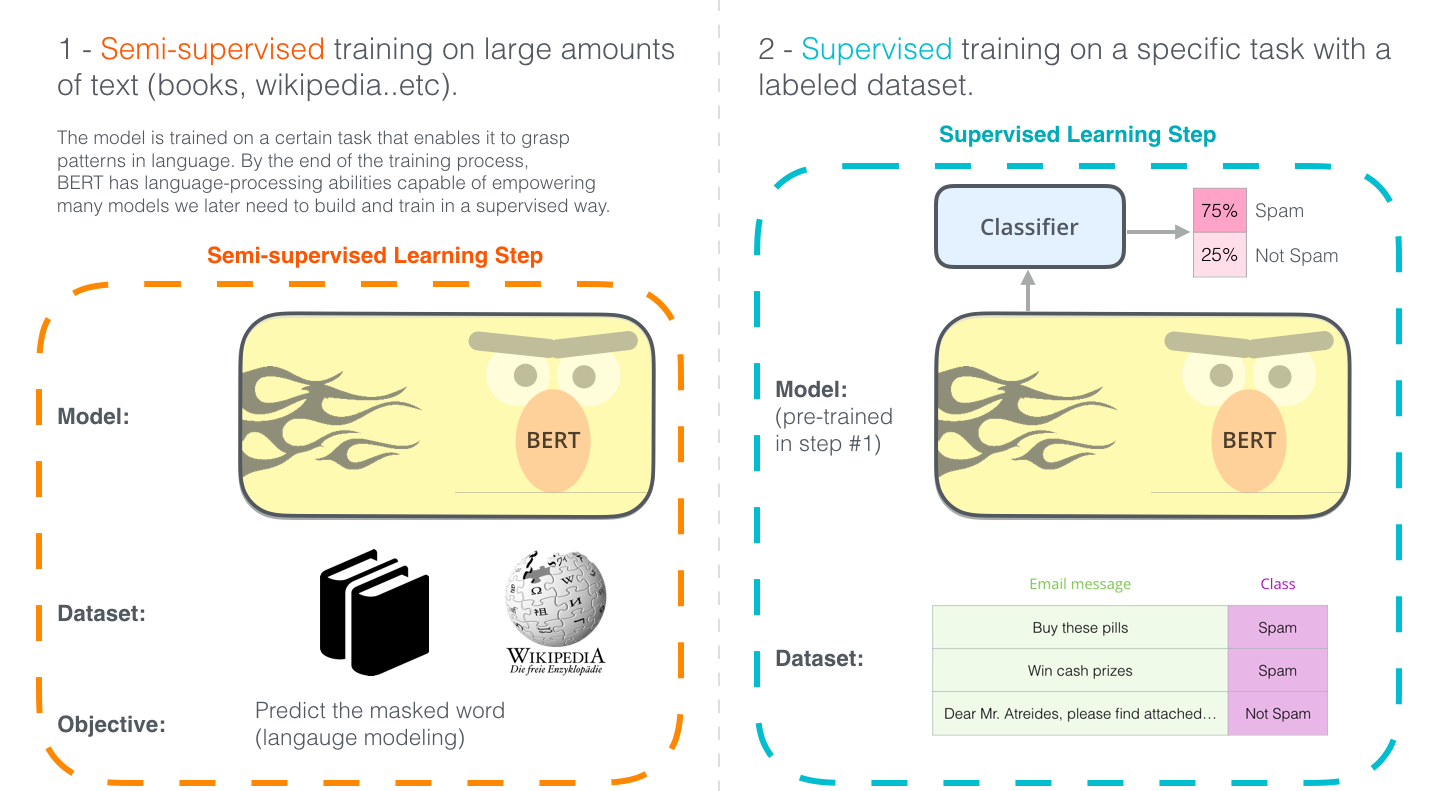

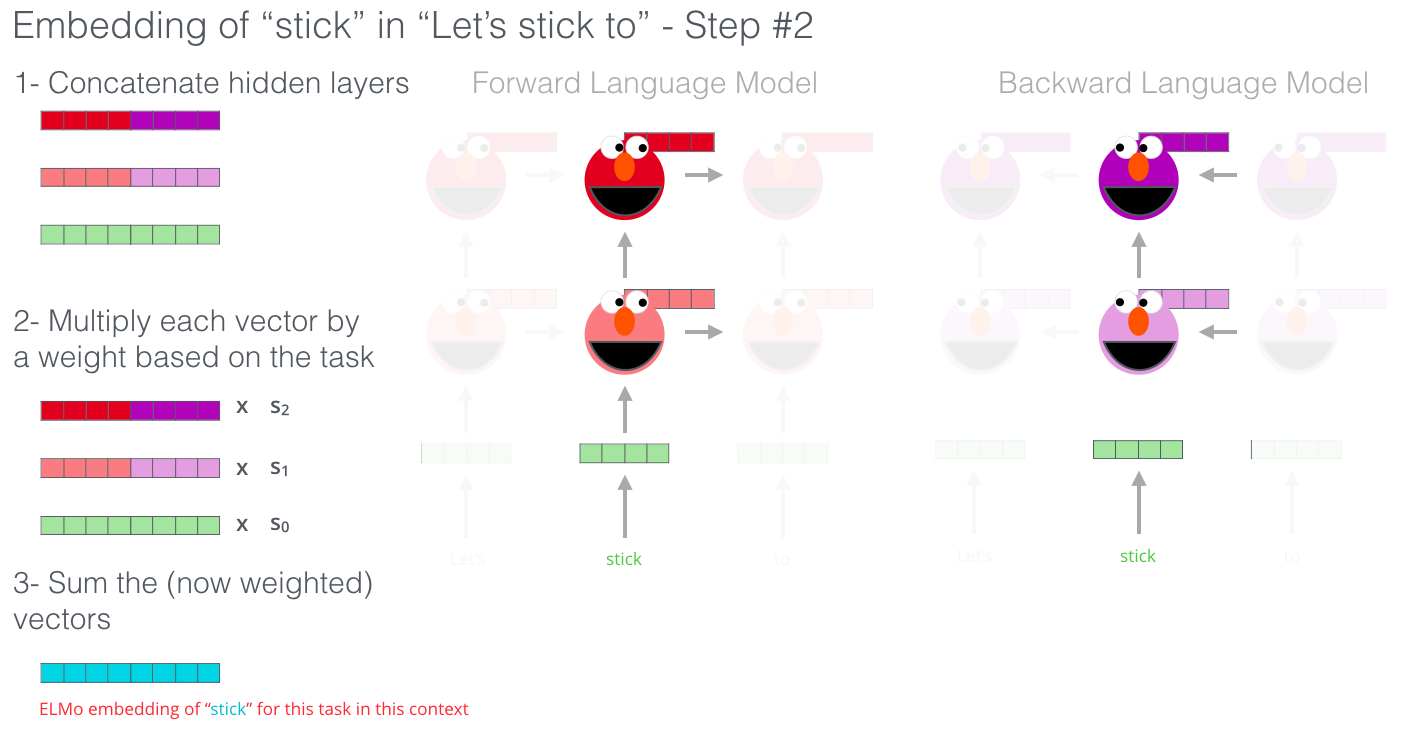

The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning) – Jay Alammar – Visualizing machine learning one concept at a time.

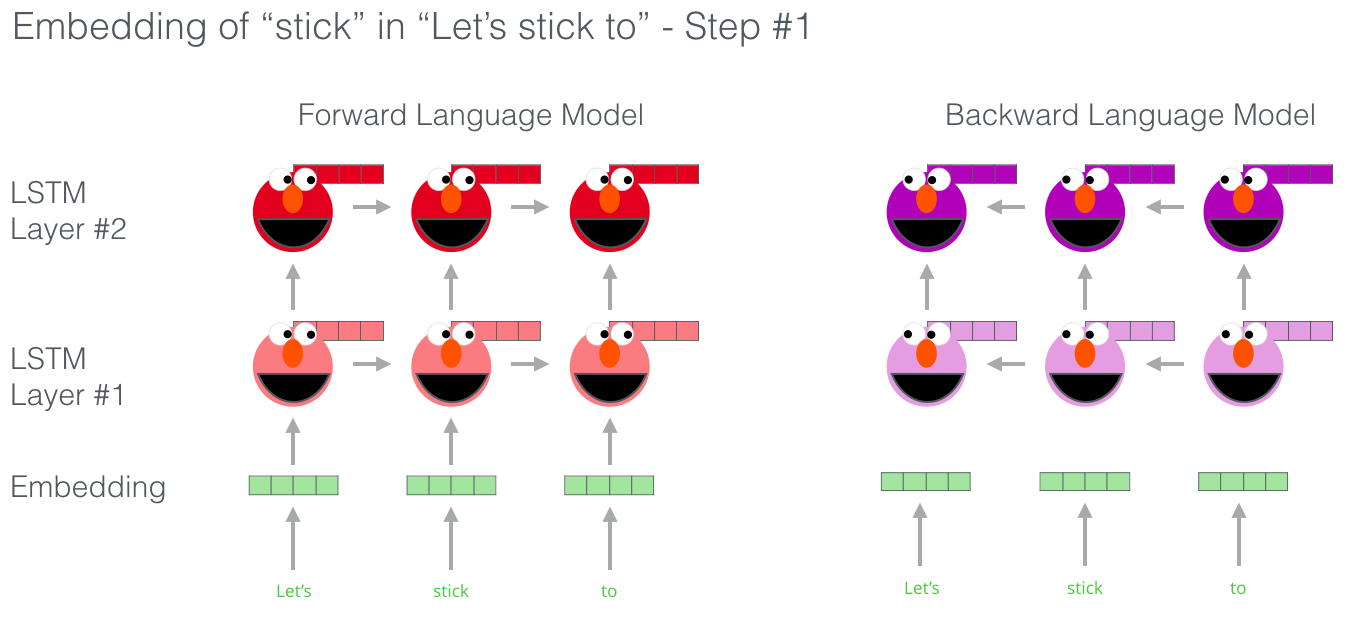

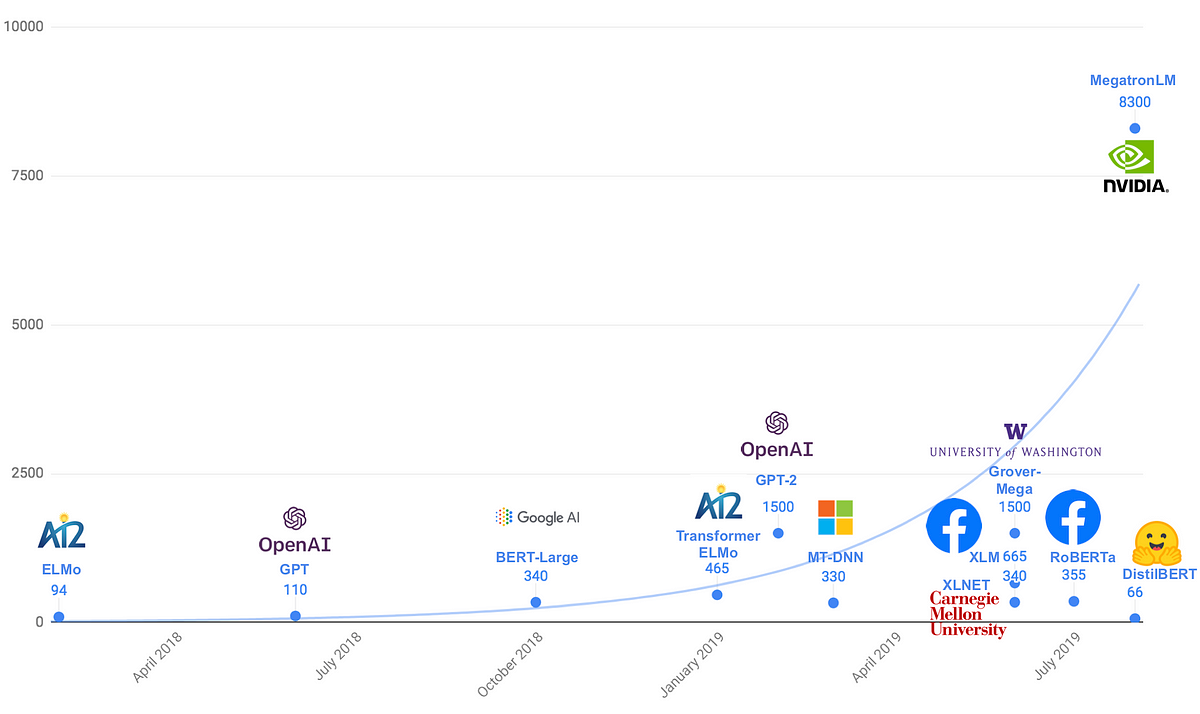

🏎 Smaller, faster, cheaper, lighter: Introducing DistilBERT, a distilled version of BERT | by Victor Sanh | HuggingFace | Medium

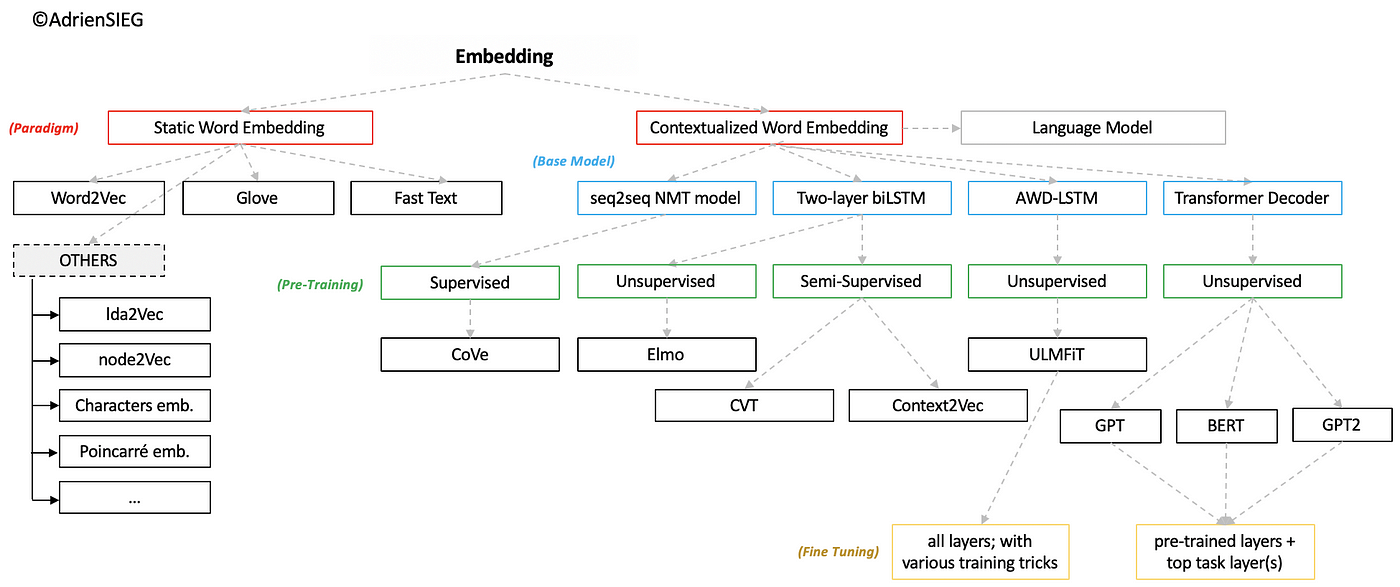

What are the main differences between the word embeddings of ELMo, BERT, Word2vec, and GloVe? - Quora

10 Things You Need to Know About BERT and the Transformer Architecture That Are Reshaping the AI Landscape - neptune.ai

The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning) – Jay Alammar – Visualizing - Studocu

15.8. Bidirectional Encoder Representations from Transformers (BERT) — Dive into Deep Learning 1.0.0-beta0 documentation

Can GPT-3 or BERT Ever Understand Language?—The Limits of Deep Learning Language Models - neptune.ai

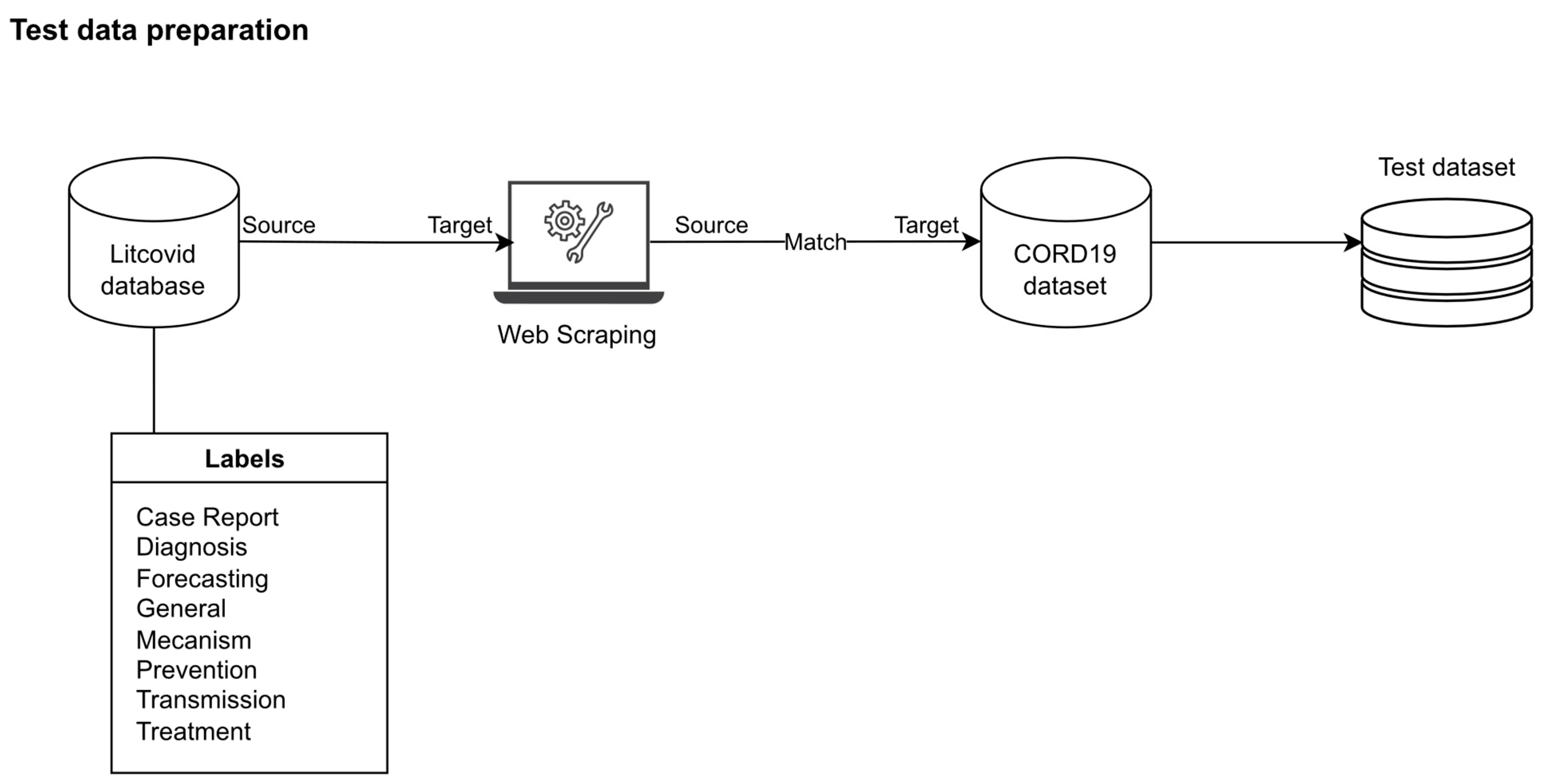

MAKE | Do We Need a Specific Corpus and Multiple High-Performance GPUs for Training the BERT Model? An Experiment on COVID-19 Dataset

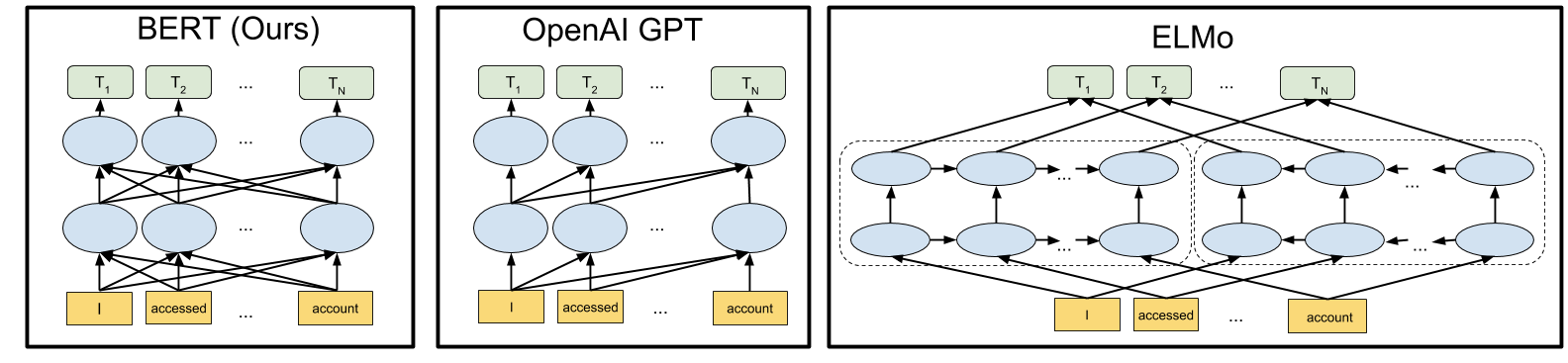

![Comparison of BERT, OpenAI GPT and ELMo model architectures [5].](https://www.researchgate.net/publication/338931711/figure/fig1/AS:999328713822208@1615269940697/Comparison-of-BERT-OpenAI-GPT-and-ELMo-model-architectures-5.png)